Logging in to ANAF SPV on Linux and macOS, from Firefox, with a certificate on a token

The ANAF website greets us with a notice that electronic invoicing through e-Factura is mandatory starting 1 January 2024. As usual with ANAF, support for this kind of thing is more complicated on Linux or macOS.

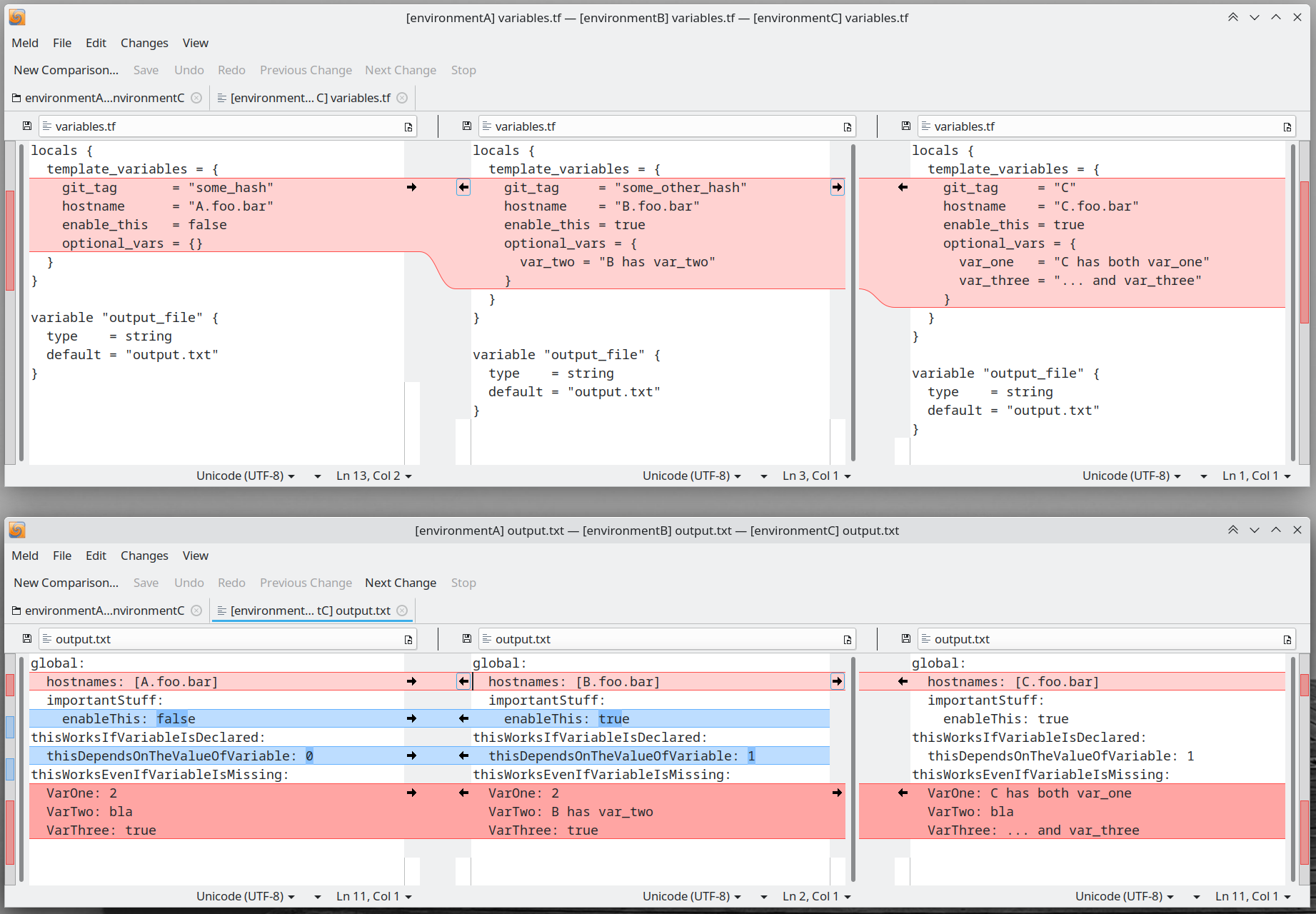

This article continues the previous one, in which I showed how to authenticate from Chrome on Linux.

The Token and the Digital Signature

I will use the same CryptoID kit from CertDigital. The mToken Crypto ID in it is made by LONGMAI. Download the following from their website:

- the CDP Client signing application

- the mToken Crypto ID driver

... for Linux or macOS, respectively.

The document signing application is written in Java and is simple to use. It can find the token attached to the computer, ask for its PIN, and then sign a list of PDFs you select, using the signature stored on the token.

Authenticating to SPV on Linux with Firefox

The steps below do not work if Firefox is installed from snap. There is an open bug at Snapcraft for this and another at Mozilla. Because I use Kubuntu, I installed Firefox from Mozilla's apt repository (that is, from a .deb). I have not tested with Firefox installed from Flatpak.

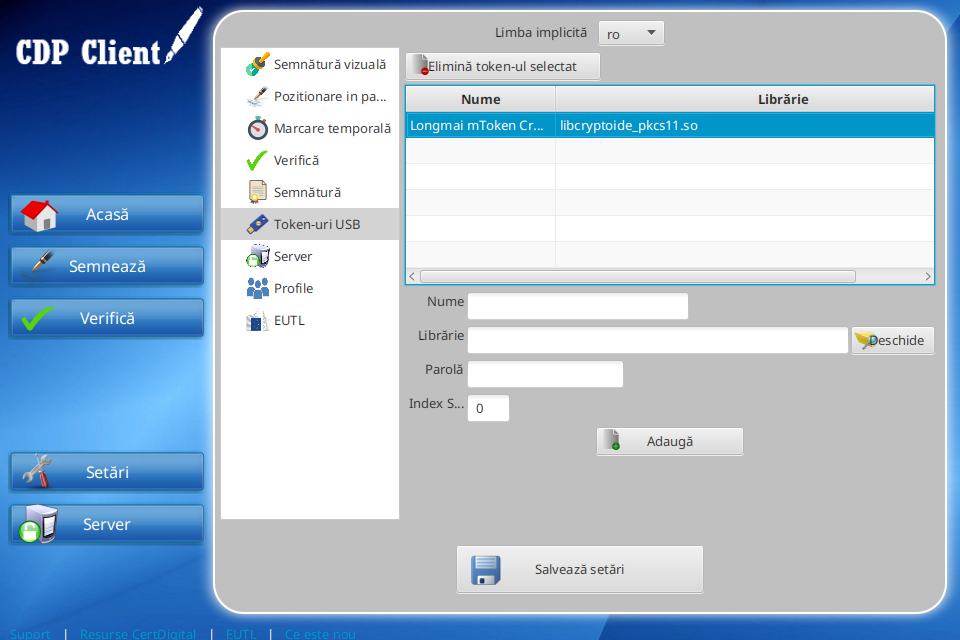

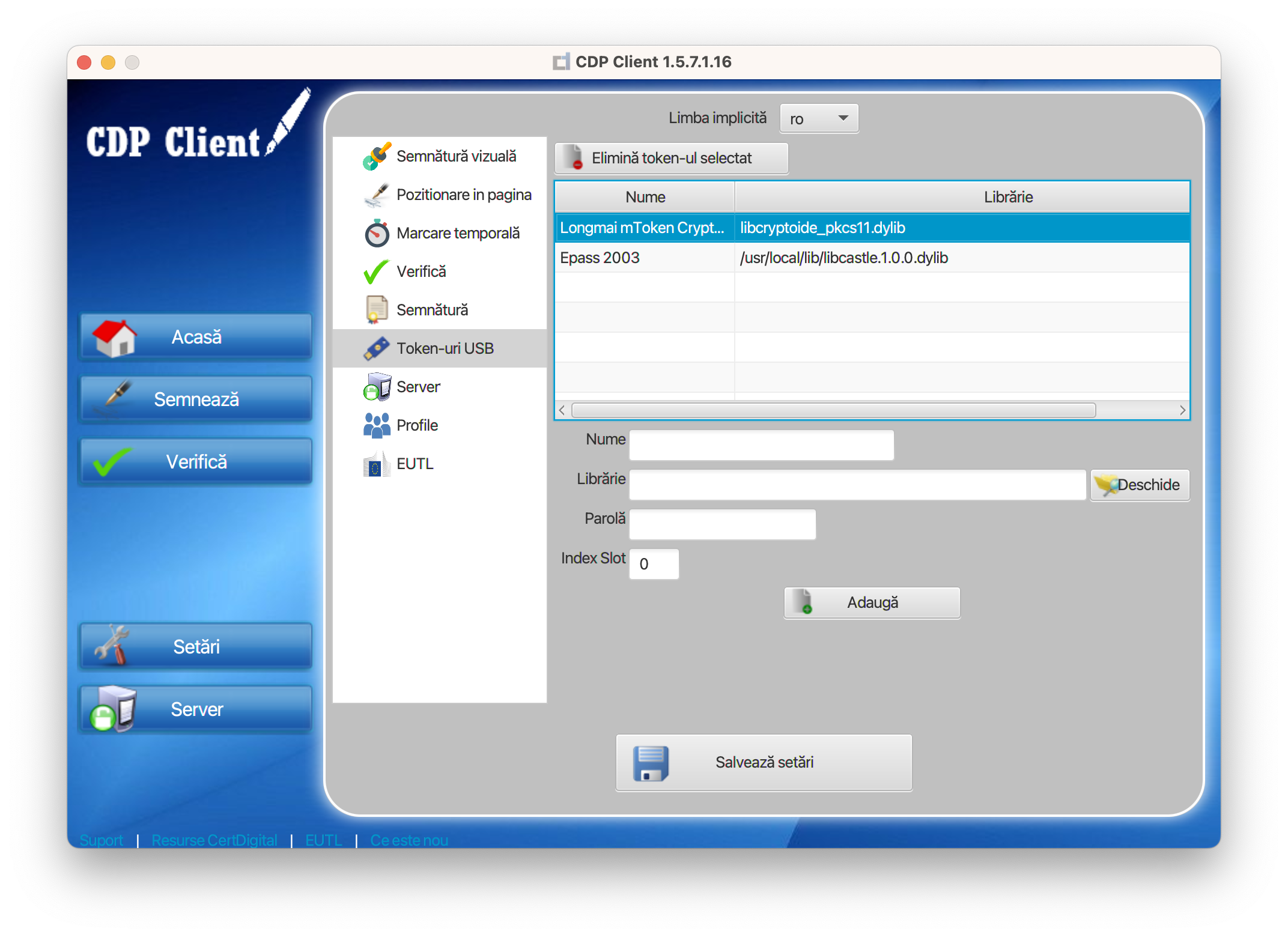

The CDP Client application gives us a hint about where its library is located:

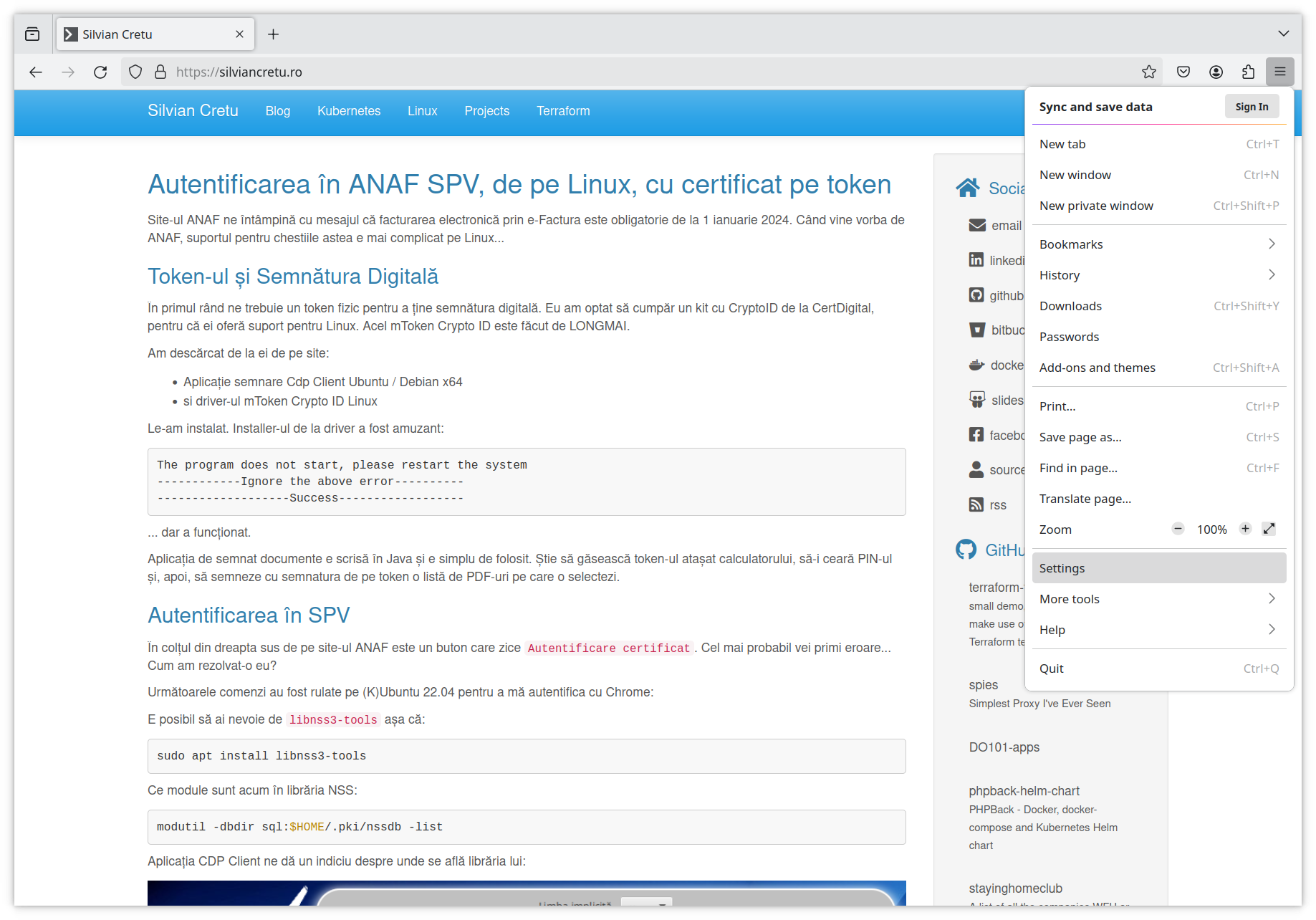

In Firefox, open the Settings window from the hamburger menu in the top right.

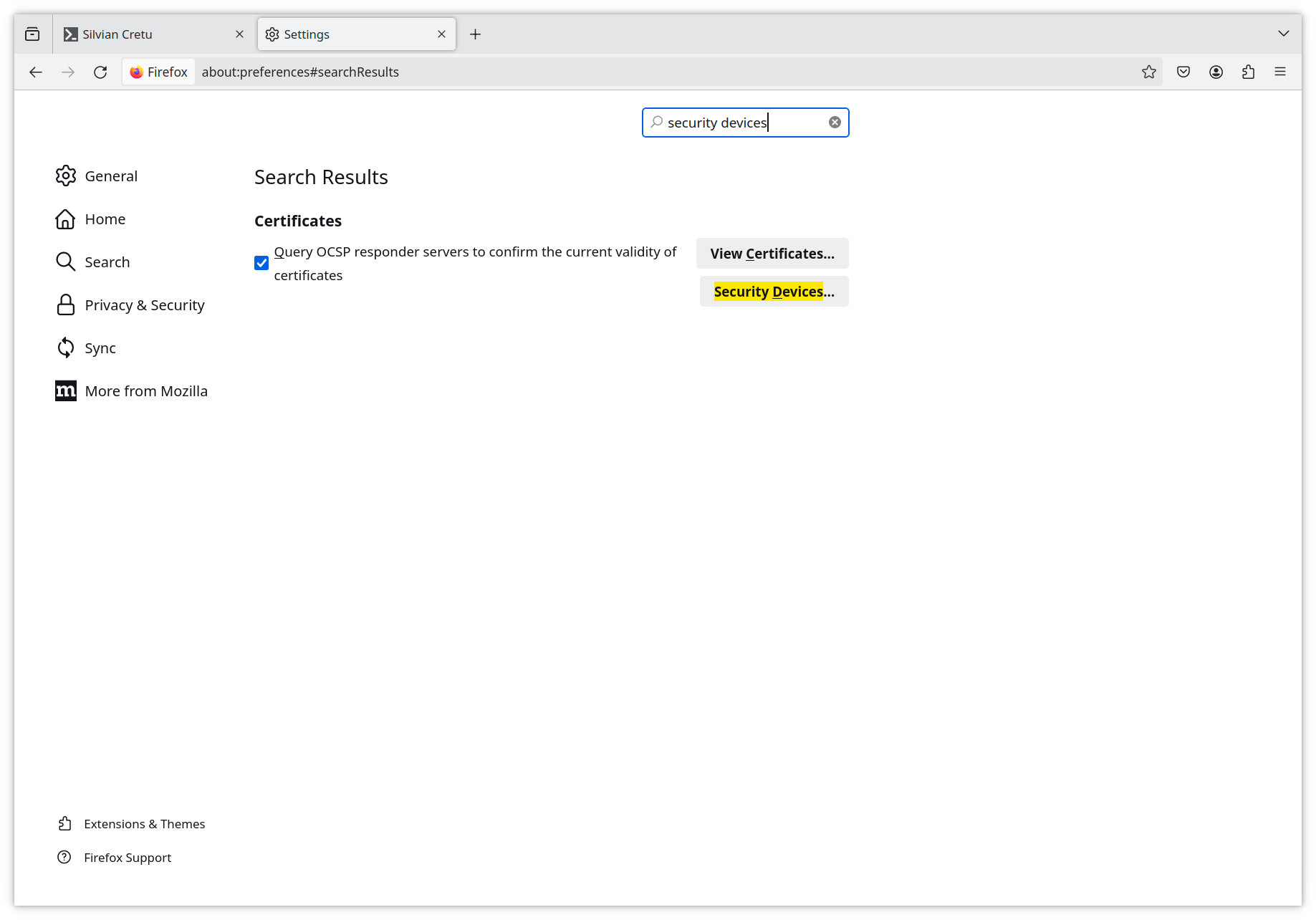

There, search for Security Devices.

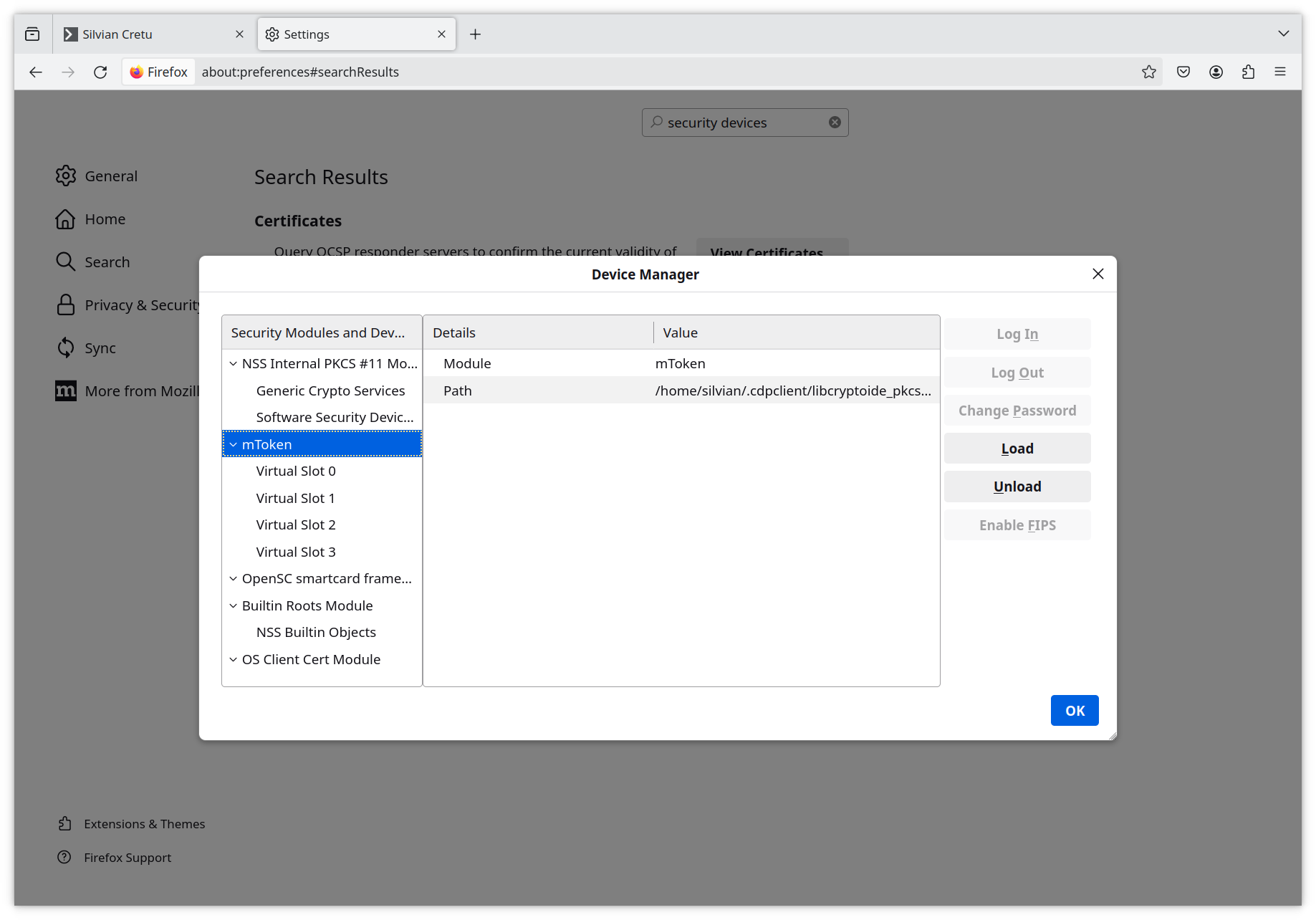

In the window that opens, click Load and navigate to your home directory, then enter the hidden .cdpclient directory and select libcryptoide_pkcs11.so. In the end it should look like this:

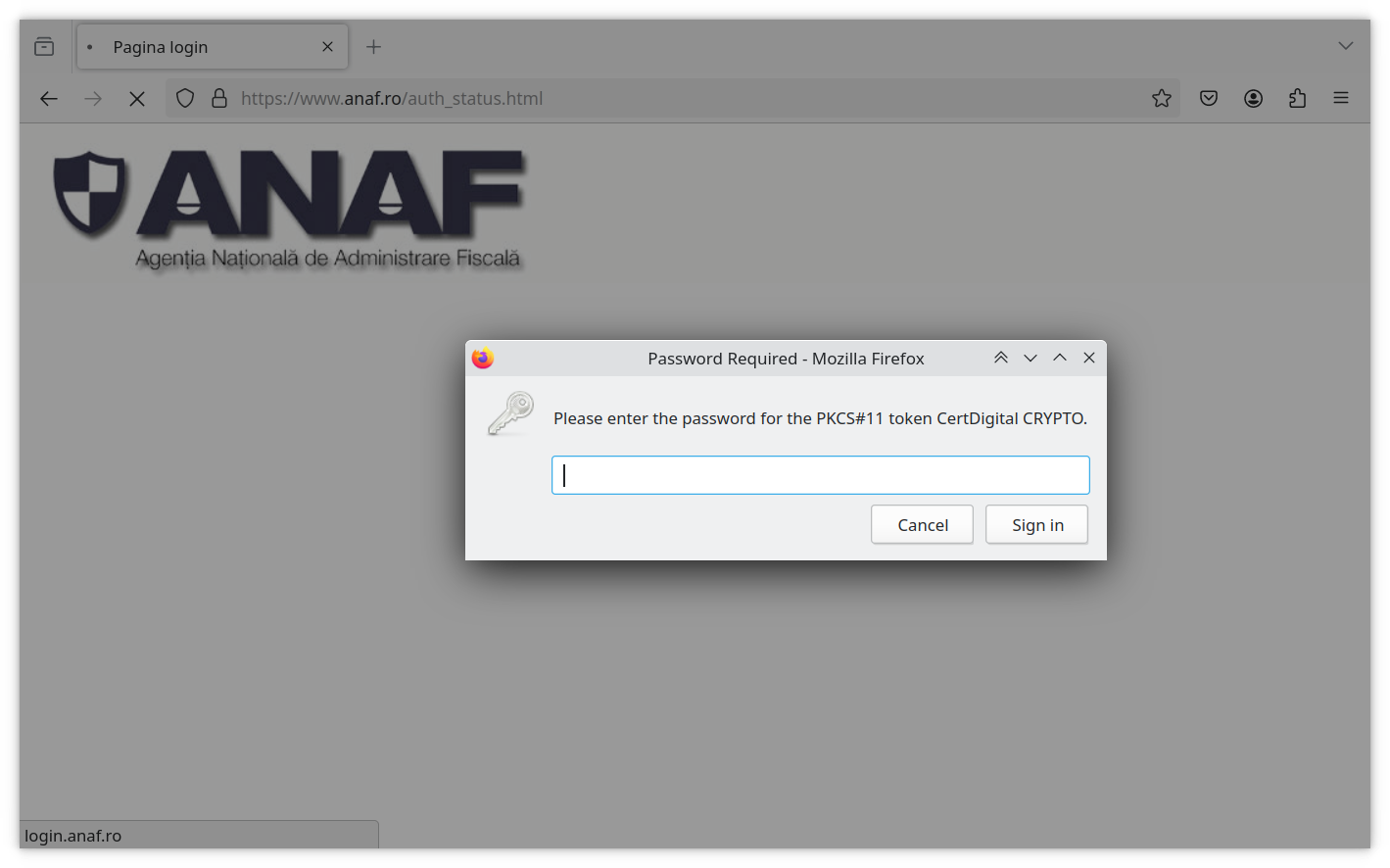

Save and close. Now, when you press the Autentificare certificat (certificate authentication) button on the ANAF site, Firefox should ask for the token's PIN and, if it gets the correct one, take you into SPV.
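For anyone who would rather not click through the dialog, Firefox also has an enterprise policy named SecurityDevices that registers PKCS#11 modules. The sketch below only builds and prints a policies.json body for the library path mentioned above. It is an untested assumption that this works with this particular token: the device label "CryptoID" is an arbitrary name chosen here, the "Add" sub-key is the form used by recent Firefox releases, and where policies.json must be placed depends on how Firefox was installed (see Mozilla's policy documentation).

```python
import json
from pathlib import Path


def build_policy(module_path: Path, device_name: str = "CryptoID") -> dict:
    """Build a policies.json structure that asks Firefox to load one PKCS#11 module.

    device_name is a hypothetical label; Firefox shows it in Security Devices.
    """
    return {
        "policies": {
            "SecurityDevices": {
                "Add": {device_name: str(module_path)}
            }
        }
    }


if __name__ == "__main__":
    # Path taken from the steps above: the hidden ~/.cdpclient directory.
    module = Path.home() / ".cdpclient" / "libcryptoide_pkcs11.so"
    print(json.dumps(build_policy(module), indent=2))
```

The script writes nothing to disk; copy the printed JSON into policies.json only after checking the policy documentation for your Firefox version.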

Authenticating to SPV on macOS with Firefox

The steps below were tested on a MacBook with an Intel processor.

The CDP Client application gives us a hint about where its library is located:

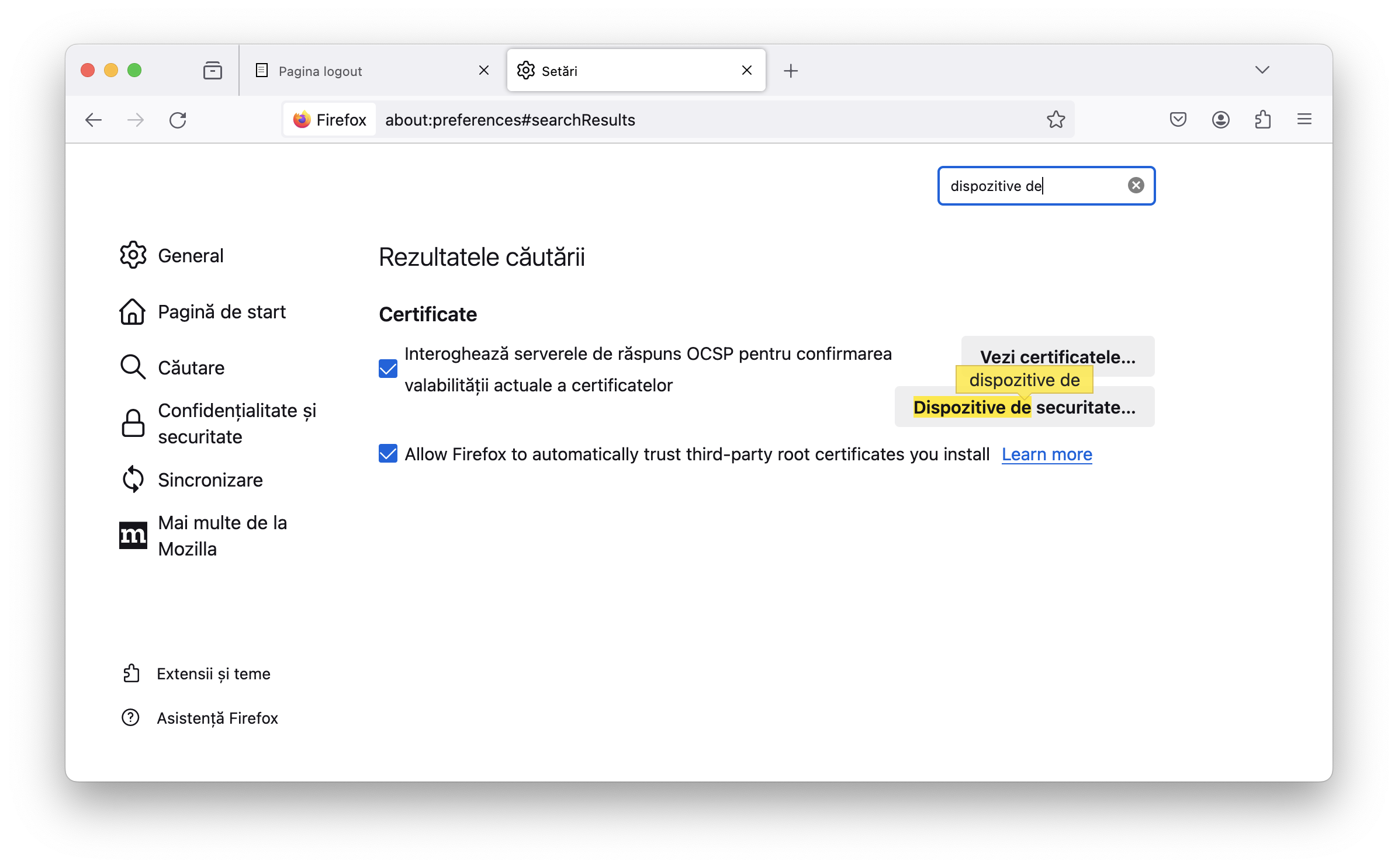

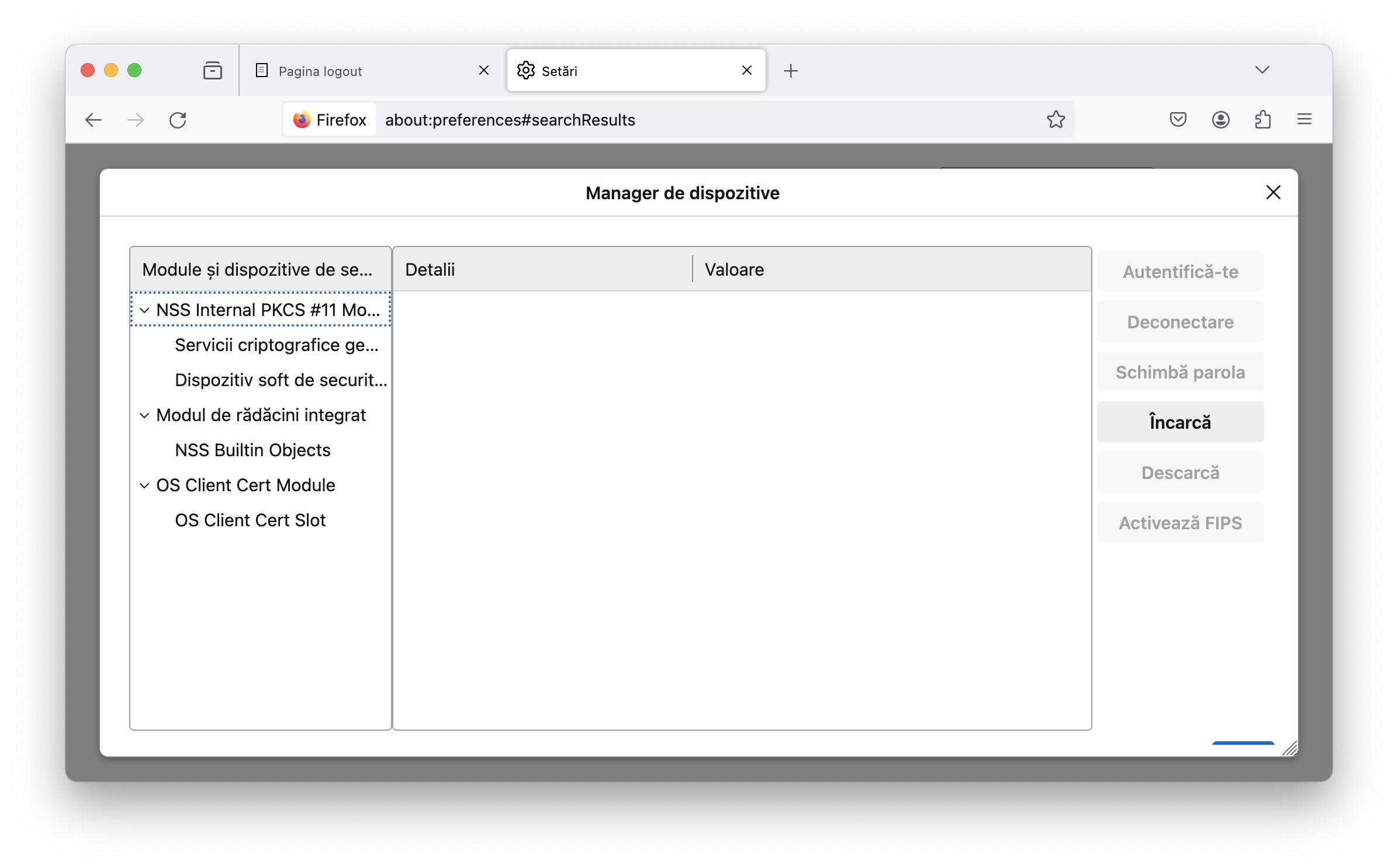

In Firefox, open the Setări / Settings window from the hamburger menu in the top right. There, search for Dispozitive de securitate / Security Devices.

In the window that opens, click Încarcă / Load and navigate to your home directory, then enter the hidden .cdpclient directory (you may need to press Command + Shift + . (period) to see it) and select libcryptoide_pkcs11.dylib.

Save and close. Now, when you press the Autentificare certificat (certificate authentication) button on the ANAF site, Firefox should ask for the token's PIN and, if it gets the correct one, take you into SPV.
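If the Load dialog cannot find the library, it can help to confirm first that the file is where the steps expect it. The sketch below is a minimal check, assuming the file names and the hidden .cdpclient directory mentioned in this article; the function name find_pkcs11_module is hypothetical and the script only looks at the file system, it does not talk to Firefox or to the token.

```python
import platform
from pathlib import Path
from typing import Optional

# File names as given in the article, keyed by platform.system() values.
MODULE_NAMES = {
    "Linux": "libcryptoide_pkcs11.so",
    "Darwin": "libcryptoide_pkcs11.dylib",
}


def find_pkcs11_module(home: Path, system: str) -> Optional[Path]:
    """Return the expected module path under home/.cdpclient if it exists, else None."""
    name = MODULE_NAMES.get(system)
    if name is None:
        return None  # platform not covered by the article
    candidate = home / ".cdpclient" / name
    return candidate if candidate.is_file() else None


if __name__ == "__main__":
    found = find_pkcs11_module(Path.home(), platform.system())
    print(found if found else "PKCS#11 module not found in ~/.cdpclient")
```

If the script reports nothing found, the path hinted at by the CDP Client application is the first thing to recheck.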